Cost monitoring in Google BigQuery can be a difficult task, especially within a growing organization and lots of (independent) stakeholders that have access to the data. If your organization is not using reserved slots (flat-rate pricing) but is billed by the number of bytes processed (on-demand pricing), costs can get quickly out of hand, and we need the means to investigate or "debug" the BigQuery usage in order to understand: - who ran queries with a high cost - what were the exact queries - when did those queries run (and are they maybe even running regularly)

Previously, we had to manually set up query logging via Stackdriver as explained in the article

Taking a practical approach to BigQuery cost monitoring

but in late 2019 BigQuery introduced

INFORMATION_SCHEMA views

as a beta feature that also contain data about BigQuery jobs via the

INFORMATION_SCHEMA.JOBS_BY_* views

and became generally available (GA) at 2020-06-16

Examples

SELECT

creation_time,

job_id,

project_id,

user_email,

total_bytes_processed,

query

FROM

`region-us`.INFORMATION_SCHEMA.JOBS_BY_USER

SELECT * FROM `region-us`.INFORMATION_SCHEMA.JOBS_BY_PROJECT

SELECT * FROM `region-us`.INFORMATION_SCHEMA.JOBS_BY_ORGANIZATION

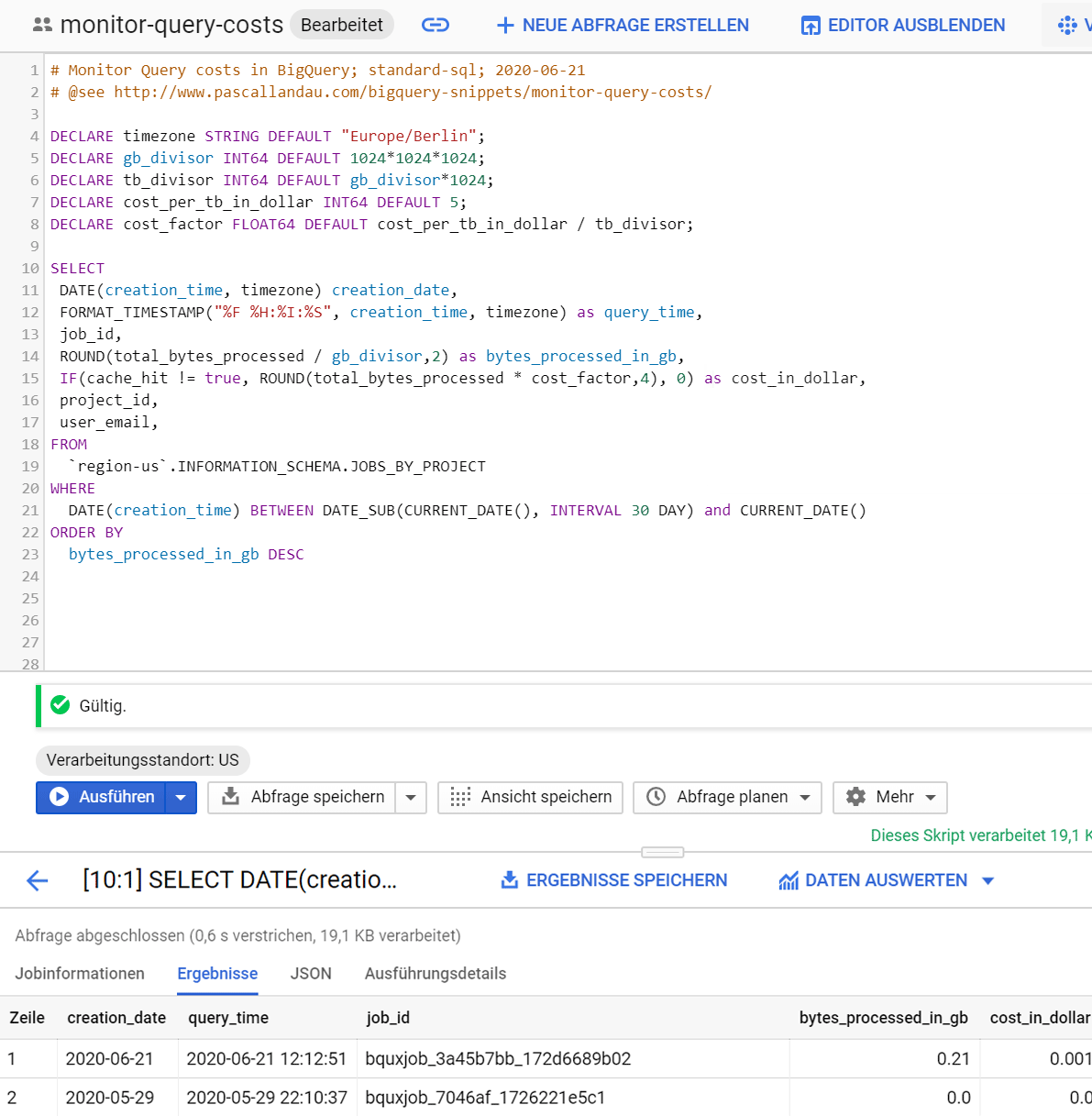

Working Example

- this query will select the most interesting fields in terms of cost monitoring from the

INFORMATION_SCHEMA.JOBS_BY_USERview for all jobs that have been run in region US in the currently selected project - the

cost_in_dollaris estimate by calculating thetotal_bytes_processedin Terabyte and multiplying the result with $5.00 (which corresponds to the cost as of today 2020-06-22). Also, we only take those costs into account if the query was not answered from the cache (see thecache_hit != truecondition) - the

creation_timeis converted to our local timezone - the results are restricted to the past 30 days by using the

WHEREclause to filter on the partition columncreation_time - feel free to replace

JOBS_BY_PROJECTwithJOBS_BY_USERorJOBS_BY_ORGANIZATION

Run on BigQuery

Notes

While playing around with the INFORMATION_SCHEMA views I've hit a couple of gotchas:

- the different views require different permissions

- the views are regionalized, i.e. we must prefix the region (see region-us in the view specification) and

must run the job in that region (e.g. from the BigQuery UI via

More > Query Settings > Processing location)

- it is not possible to mix multiple regions in the query, because a query with processing location US

can only access resources in location US. Though it would be very helpful for organizations that actively use different locations,

something like this is not possible:

SELECT * FROM

(SELECT * `region-us`.INFORMATION_SCHEMA.JOBS_BY_ORGANIZATION)

UNION ALL

(SELECT * `region-eu`.INFORMATION_SCHEMA.JOBS_BY_ORGANIZATION)

- data is currently only kept for the past 180 days

- the JOBS_BY_USER view seems to "match" the user based on the email address. My user email adress is a @googlemail.com

address; in the user column it is stored as @gmail.com. Thus, I get no results when using JOBS_BY_USER

- JOBS_BY_USER and JOBS_BY_PROJECT will use the currently selected project by default. A different

project (e.g. other-project) can be specified via

SELECT * FROM `other-project.region-us`.INFORMATION_SCHEMA.JOBS_BY_PROJECT

- the full query is not available for JOBS_BY_ORGANIZATION

Use Cases

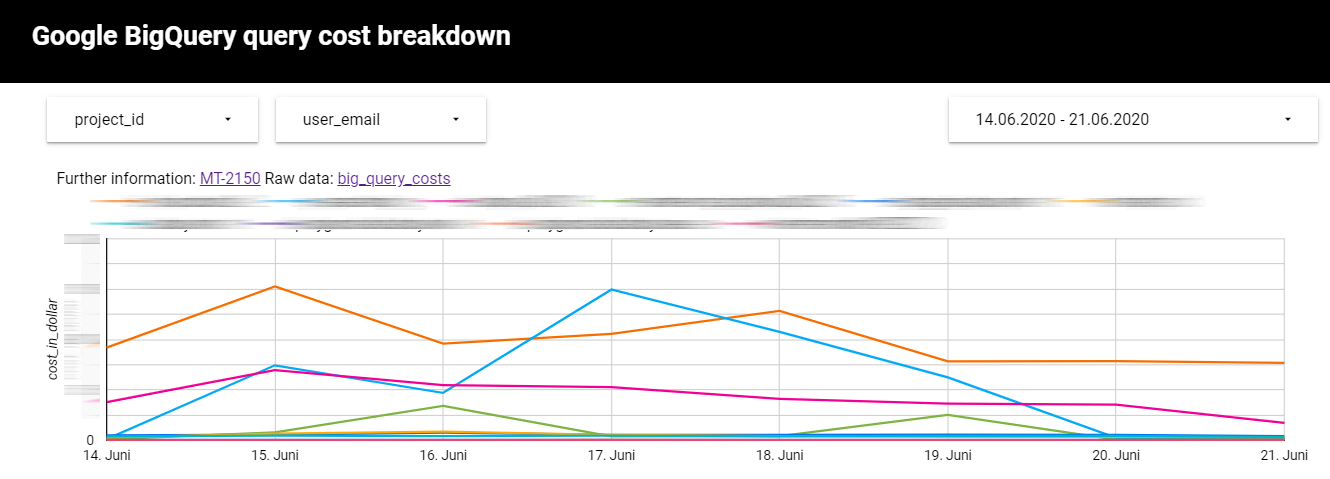

I use this approach in our organization to set up a view based on INFORMATION_SCHEMA.JOBS_BY_ORGANIZATION

that is then used as a data source for Google DataStudio.

This allows me to get a quick high level overview

over all query costs and further enables me to drill down deeper if I need to. I can even find the exact

queries via their job_id.

Wanna stay in touch?

Since you ended up on this blog, chances are pretty high that you're into Software Development (probably PHP, Laravel, Docker or Google Big Query) and I'm a big fan of feedback and networking.

So - if you'd like to stay in touch, feel free to shoot me an email with a couple of words about yourself and/or connect with me on LinkedIn or Twitter or simply subscribe to my RSS feed or go the crazy route and subscribe via mail and don't forget to leave a comment :)

Subscribe to posts via mail